Meta Terminates Contractors After Reports of Inappropriate Content from Ray-Ban Meta Users

Updated May 2, 2026

Meta has terminated a group of Kenyan contractors who reported witnessing users of Ray-Ban Meta glasses engaging in sexual activities. The company stated that these contractors did not meet its standards, leading to their dismissal. This decision raises questions about content moderation practices and the implications for user privacy and safety.

Sources reviewed: 1 (linked below for direct verification)

Official sources: 0 (preferred when available)

Review status: Human reviewed (AI-assisted draft, editor-approved publish)

Confidence: High confidence, 85/100 from the draft pipeline

This AI Signal brief is meant to save busy builders time: what changed, why it matters, and where the reporting comes from.

This story appears to rely mostly on secondary or mixed-source reporting, so readers should treat it as a developing summary rather than a final word. If you spot an issue, email [email protected] or read our editorial standards.

Why it matters

- Developers and product teams must consider the ethical implications of augmented reality (AR) technologies and user behavior, as this incident highlights potential misuse.

- The incident underscores the importance of robust content moderation frameworks, which are critical for maintaining user trust in AR applications.

- Businesses developing AR products may need to implement stricter guidelines and training for contractors and employees involved in content moderation.

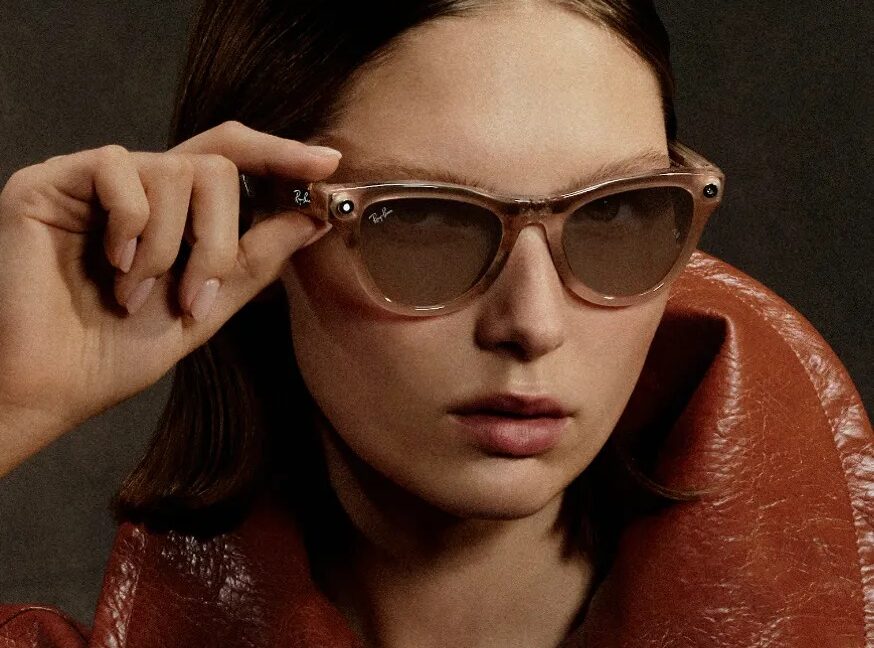

Meta has terminated a group of Kenyan contractors who reported witnessing inappropriate behavior by users of Ray-Ban Meta glasses. The company said the contractors did not meet its standards, a decision that raises concerns about content moderation practices and user safety in augmented reality (AR) environments.

What happened

According to a report by Ars Technica, Meta let go a group of contractors based in Kenya after they reported seeing users of the Ray-Ban Meta glasses engaging in sexual activities. The contractors were responsible for moderating content related to the use of these smart glasses, which integrate augmented reality features. Meta's decision to terminate these workers has sparked discussion about the company's content moderation policies and the challenges of overseeing user-generated content in real time.

Meta's official statement indicated that the contractors did not meet the company's standards, although specific details regarding these standards were not disclosed. This lack of transparency raises questions about the criteria used to evaluate contractors and the overall effectiveness of content moderation in AR applications.

Why it matters

The termination of these contractors is significant for several reasons:

- Ethical Considerations: Developers and product teams must grapple with the ethical implications of AR technologies. The incident highlights how users can misuse these devices, prompting a need for better safeguards and user education.

- Content Moderation Frameworks: The situation underscores the necessity for robust content moderation frameworks. As AR technologies become more prevalent, maintaining user trust will depend on effective moderation practices that can address inappropriate behavior swiftly.

- Guidelines for Contractors: Businesses involved in developing AR products may need to implement stricter guidelines and training for contractors and employees engaged in content moderation. This incident serves as a reminder of the complexities involved in managing user-generated content and the potential risks associated with it.

Context and caveats

The sourcing for this incident is limited to a single report from Ars Technica, which provides an overview of the situation but lacks detailed insights into Meta's internal policies or the specific circumstances surrounding the contractors' reports. This limitation means that while the incident is concerning, the full context and implications may not be entirely clear.

Moreover, the broader implications for the AR industry are still unfolding. As more companies venture into AR technologies, the lessons learned from this incident could shape future content moderation practices and user engagement strategies.

What to watch next

As the AR landscape evolves, it will be essential to monitor how companies like Meta address the challenges of content moderation and user safety. Key areas to watch include:

- Policy Changes: Look for updates from Meta regarding any changes to its content moderation policies or contractor guidelines in response to this incident.

- Industry Standards: Pay attention to how other companies in the AR space respond to similar challenges and whether they adopt new standards for content moderation.

- User Education Initiatives: Watch for initiatives aimed at educating users about appropriate behavior while using AR technologies, which could help mitigate misuse.

In conclusion, the termination of the contractors by Meta highlights critical issues surrounding content moderation in augmented reality. As the industry continues to grow, it will be vital for developers and product teams to prioritize user safety and ethical considerations in their designs and policies.

Sources

- Meta cuts contractors who reported seeing Ray-Ban Meta users have sex — Ars Technica AI
