The Rise of Tokenmaxxing and Its Implications for AI Development

Updated April 17, 2026

The concept of 'tokenmaxxing' is gaining traction as the gap between AI insiders and the general public widens. OpenAI's aggressive acquisitions, including finance apps and media properties, highlight a trend in which companies are rapidly expanding their AI capabilities. This shift raises questions about the sustainability and transparency of such developments.

Sources reviewed: 2 (linked below for direct verification)

Official sources: 0 (preferred when available)

Review status: Human reviewed (AI-assisted draft, editor-approved publish)

Confidence: High confidence (85/100 from the draft pipeline)

This AI Signal brief is meant to save busy builders time: what changed, why it matters, and where the reporting comes from.

This story appears to rely mostly on secondary or mixed-source reporting, so readers should treat it as a developing summary rather than a final word. If you spot an issue, email [email protected] or read our editorial standards.

Why it matters

- Developers may need to adapt to a rapidly changing AI ecosystem where established players are consolidating resources and capabilities.

- Product teams could face challenges in differentiating their offerings as major companies dominate the market with extensive acquisitions.

- The growing divide between AI insiders and the public may lead to increased scrutiny and demand for transparency in AI technologies, impacting how products are developed and marketed.

The term 'tokenmaxxing' is emerging in discussions of the current state of AI as the gap between AI insiders and the general public continues to widen. OpenAI's recent acquisitions, which range from finance applications to media ventures, exemplify a trend in which companies are rapidly expanding their AI capabilities. This brief looks at what these developments mean for developers, builders, operators, and product teams.

What happened

According to a recent TechCrunch article, the phenomenon of tokenmaxxing reflects a growing divide in the AI community. As major players like OpenAI aggressively acquire various technologies and platforms, the vocabulary and concerns surrounding AI are evolving. For instance, a well-known shoe company has rebranded itself as an AI infrastructure provider, indicating a shift in how companies are positioning themselves in the market. Additionally, Anthropic has announced a new AI model that it claims is too powerful to release publicly, further complicating the landscape of AI development and deployment.

Why it matters

The implications of these developments are significant for those involved in AI:

- Adapting to a changing ecosystem: Developers may need to adjust their strategies as established companies consolidate resources and capabilities, potentially limiting opportunities for smaller players.

- Market differentiation challenges: Product teams could struggle to differentiate their offerings in a market increasingly dominated by major acquisitions, making it essential to innovate and find unique value propositions.

- Demand for transparency: The widening gap between AI insiders and the general public may lead to greater scrutiny and a push for transparency in AI technologies. This could affect how products are developed, marketed, and regulated, necessitating a focus on ethical considerations and user trust.

Context and caveats

While the term tokenmaxxing is gaining traction, the sourcing around its implications remains limited. The discussions primarily stem from observations about OpenAI's acquisitions and Anthropic's model announcements. As the AI landscape continues to evolve, it is crucial for stakeholders to remain informed about these trends and their potential impacts.

What to watch next

As the trend of tokenmaxxing unfolds, several key areas warrant attention:

- Regulatory responses: Watch for potential regulatory actions aimed at addressing the implications of AI consolidation and the need for transparency in AI technologies.

- Emerging competitors: Keep an eye on how smaller companies and startups respond to the dominance of major players, particularly in terms of innovation and market positioning.

- Public perception: Monitor how public sentiment towards AI evolves, especially in light of concerns about transparency and the ethical implications of powerful AI models.

In conclusion, the rise of tokenmaxxing presents both challenges and opportunities for developers, builders, operators, and product teams. By staying informed and adaptable, stakeholders can navigate this rapidly changing landscape and contribute to a more transparent and equitable AI future.

Sources

- Tokenmaxxing, OpenAI’s shopping spree, and the AI Anxiety Gap — TechCrunch AI

- Are we tokenmaxxing our way to nowhere? — TechCrunch AI

Comments

Log in with

Loading comments…

More in Business

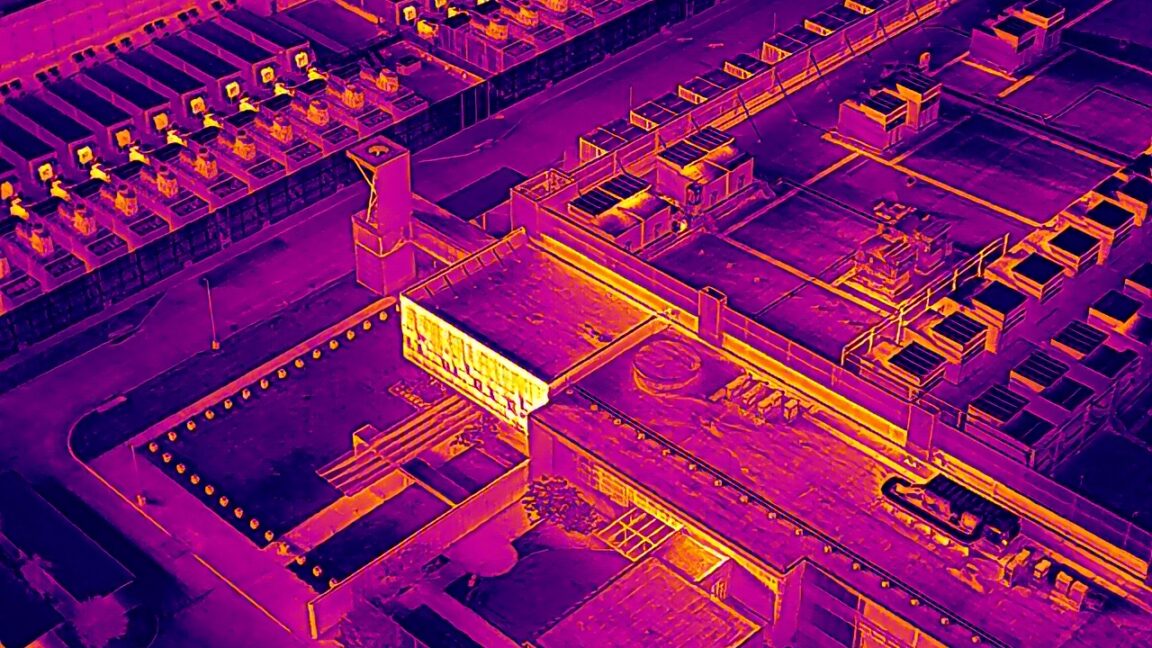

Construction Delays Impact 40% of US Data Centers Planned for 2026

Recent satellite and drone imagery has revealed significant construction delays affecting nearly…

10h ago

Tinder Expands Identity Verification with Sam Altman's Orb Technology

Tinder is introducing a new identity verification feature that requires users to visit physical…

10h ago

OpenAI Executive Kevin Weil Is Leaving the Company

Kevin Weil, a former VP at Instagram, is leaving OpenAI, where he led the AI science application.…

10h ago

OpenAI's Sora Team Leader Bill Peebles Departs Amid Strategic Shift

Bill Peebles, the leader of OpenAI's Sora video generation tool, has announced his departure from…

16h ago